How Percona Toolkit Helped Us Achieve Zero-Downtime Schema Changes

At Eshopbox, database schema changes used to be one of the most dreaded activities. Even a small DDL operation would mean:

Stopping all crons before the change and restarting them after — pulling in multiple teams at odd hours.

Downtime that scaled with table size — sometimes hours.

Replication lag nightmares — master-slave sync issues would pop up even after the schema change was complete.

Clearly, this was not scalable.

That’s when we discovered Percona Toolkit, specifically the pt-online-schema-change utility.

Why Percona Toolkit?

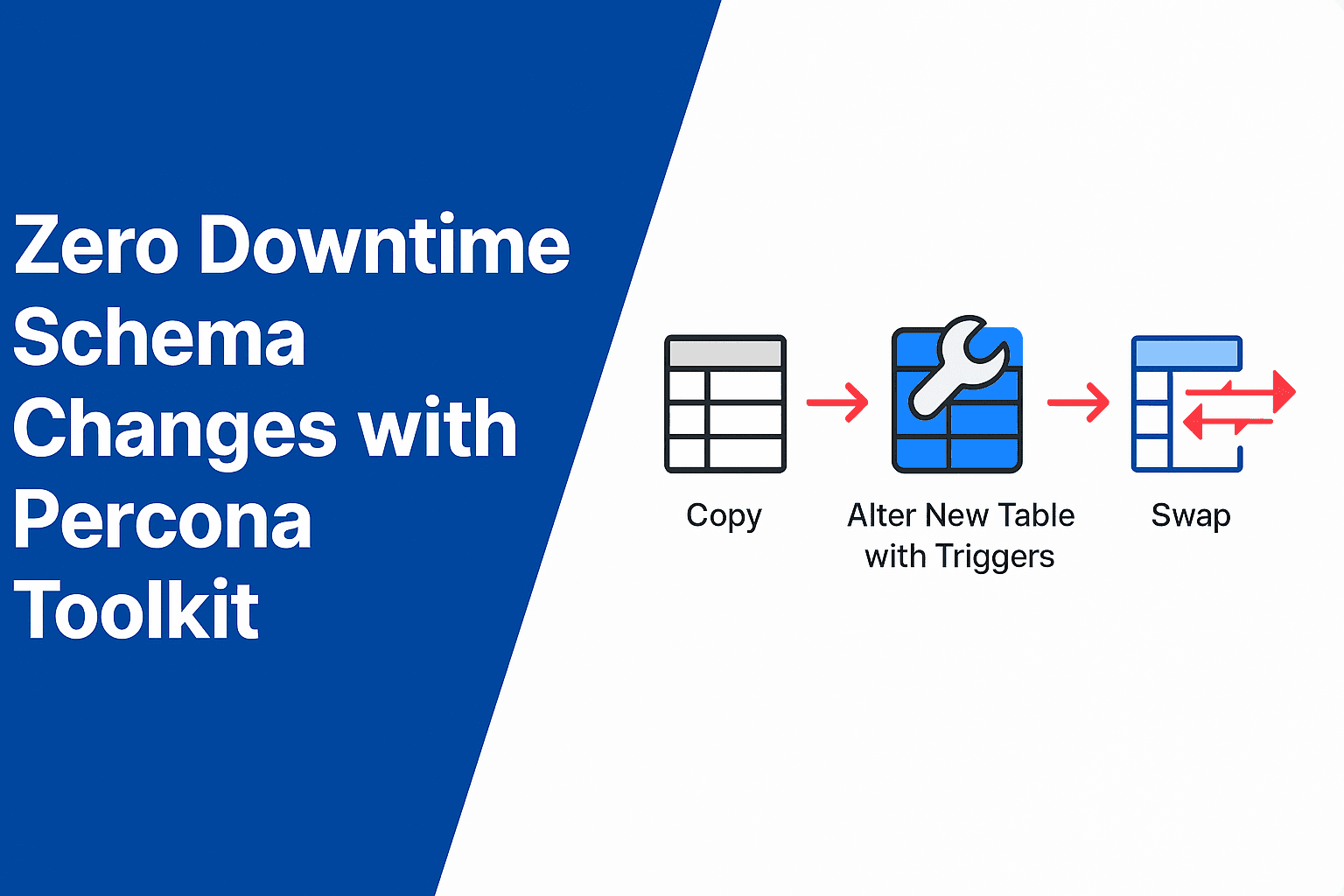

The magic of pt-online-schema-change is simple but powerful:

It creates a copy of the target table.

Applies the schema change (ALTER, INDEX, etc.) on the copy.

Sets up triggers to replicate ongoing writes to both tables.

Once ready, swaps the old table with the new one, transparently.

The result? No downtime, even for large tables.

Challenge: How Do We Test This?

The tricky part: staging traffic ≠ production traffic.

Our staging had crons running, but nowhere near enough user traffic to replicate real-world conditions.

We needed to test how the schema change behaves with continuous inserts and updates.

The solution:

We used Postman scripts to generate artificial traffic, continuously inserting rows into the target table.

We bulk-inserted dummy data to simulate a large dataset closer to production.

This gave us confidence that the tool would behave as expected under load.

Step-by-Step: How We Did It

1. Create a VM Instance

We provisioned a dedicated VM for running Percona Toolkit:

Machine:

e2-standard-2(2 vCPUs, 8 GB Memory)OS: Ubuntu 22.04.5 LTS

Storage: 20 GB

Labels for easy identification

This ensures a controlled, isolated environment for running schema changes without affecting app servers.

2. Install and Configure Cloud SQL Proxy

Since our MySQL database runs on GCP Cloud SQL, direct connections aren’t always straightforward.

We installed Cloud SQL Proxy to establish a secure tunnel and access the DB via localhost.

This step is critical for:

Security (no exposing DB IPs publicly).

Convenience (simpler connection strings).

3. Set Database Flag

We enabled:

log_bin_trust_function_creators = ON

Why?

Percona Toolkit creates triggers and temporary functions. By default, Cloud SQL blocks such operations unless this flag is enabled. Without it, the tool fails. You can also do the same from the Google Cloud console interface.

4. Prevent Session Timeout with tmux

Schema migrations can run for hours depending on table size. If your SSH session dies, you don’t want the migration to die with it.

That’s why we ran Percona Toolkit inside tmux:

tmux new -s ptosc

Detach (

Ctrl + B, D) to keep it running.Reattach anytime with

tmux attach -t ptosc.

This small step saved us a lot of anxiety.

5. Run Schema Change with pt-online-schema-change

Here’s the exact command we ran (staging first, then production):

pt-online-schema-change \

--alter "ADD INDEX idx_return_status_updated_at (return_status, external_updated_at)" \

--charset=latin1 \

--no-version-check \

--recursion-method=none \

--chunk-time=2 \

--chunk-size-limit=2000 \

--max-load "Threads_running=30" \

--critical-load "Threads_running=40" \

--max-lag=10 \

--check-slave-lag h=<replica-host>,P=3306,u=<user>,p=<password> \

--check-interval=5 \

--progress time,10 \

--alter-foreign-keys-method=auto \

--preserve-triggers \

--pause-file=/tmp/ptosc.pause \

--execute \

--set-vars "lock_wait_timeout=600,innodb_lock_wait_timeout=60,wait_timeout=28800,interactive_timeout=28800,net_read_timeout=600,net_write_timeout=600,max_allowed_packet=1073741824" \

h=<master-host>,P=3306,u=<user>,p=<password>,D=eshopbox_wms_production,t=return_shipment_status_logs

Explaining Key Flags

Here’s what each flag does (and why we used it):

--alter→ The schema change. In our case, adding a composite index.--charset=latin1→ Matches our table’s charset to avoid encoding mismatches.--no-version-check→ Skips version check to avoid interruptions (useful in CI/CD).--recursion-method=none→ Disables automatic slave discovery; we explicitly provided replica host.--chunk-time=2→ Each data chunk should take ~2s. Balances migration speed vs. load.--chunk-size-limit=2000→ Prevents overly large chunks that can lock tables.--max-load "Threads_running=30"→ If DB has >30 active threads, pause migration. Protects production.--critical-load "Threads_running=40"→ If threads exceed 40, migration aborts immediately. Safety net.--max-lag=10→ If replica lag exceeds 10s, migration pauses. Ensures replication health.--check-slave-lag ...→ Host details of replica to monitor lag.--check-interval=5→ Checks system health every 5 seconds.--progress time,10→ Prints progress every 10s for monitoring.--alter-foreign-keys-method=auto→ Handles foreign keys automatically.--preserve-triggers→ Ensures existing triggers remain intact.--pause-file=/tmp/ptosc.pause→ Migration pauses if this file exists. Handy kill-switch.--set-vars ...→ Overrides MySQL system variables for smoother long-running ops.

⚖️ Tip for tuning flags:

Start with conservative values (

chunk-time,max-load,max-lag).Run in staging with traffic simulation.

Adjust iteratively based on monitoring (CPU, replication lag, query latency).

Execution on Production

After verifying everything on staging with traffic simulation, we confidently ran the exact command on production.

✅ Results:

No downtime.

Replication lag stayed within safe limits.

Teams didn’t have to pause crons or wake up at odd hours.

Lessons Learned

Always test on staging with realistic traffic.

Monitoring is key — keep an eye on replication lag, threads running, and system load.

Have a rollback plan —

--pause-fileand conservative flags saved us from surprises.Percona Toolkit is powerful, but safe only if configured properly.

What’s Next

We’re now integrating pt-online-schema-change into our database migration pipeline so that schema changes are:

Version-controlled

Tested automatically in staging

Rolled out with minimal human intervention